This DevSecOps statistics report pulls together 2026 market data, industry research, and a lightweight client pulse to give organizations one place to reference the numbers that shape DevOps and cybersecurity decisions.

The statistics don’t always match across sources because they measure different layers, so we call out what each report actually captures and avoid blending survey signals with observed data. The goal is to translate the statistics into practical delivery guidance for development teams shipping in multi-cloud environments: what to gate, what to automate, and what evidence you need to keep security explainable.

TL;DR: DevSecOps statistics for 2026

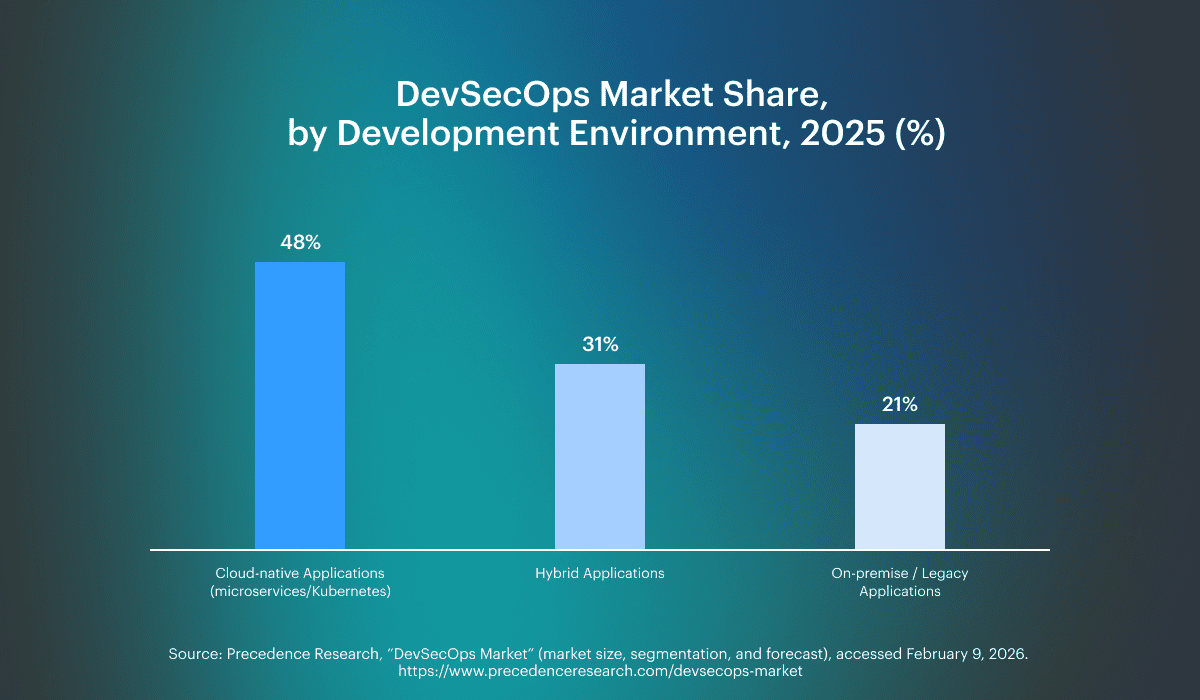

- 48% of the DevSecOps market is driven by cloud-native applications, and 28% by secure CI/CD automation (segment shares, 2025). Precedence Research

- 60.1% of category spend is software (not services), which signals a shift toward platformized controls (2024). Grand View Research

- 36% of organizations develop software using DevSecOps, and 60% of rapid teams embed these practices (adoption snapshot). StrongDM

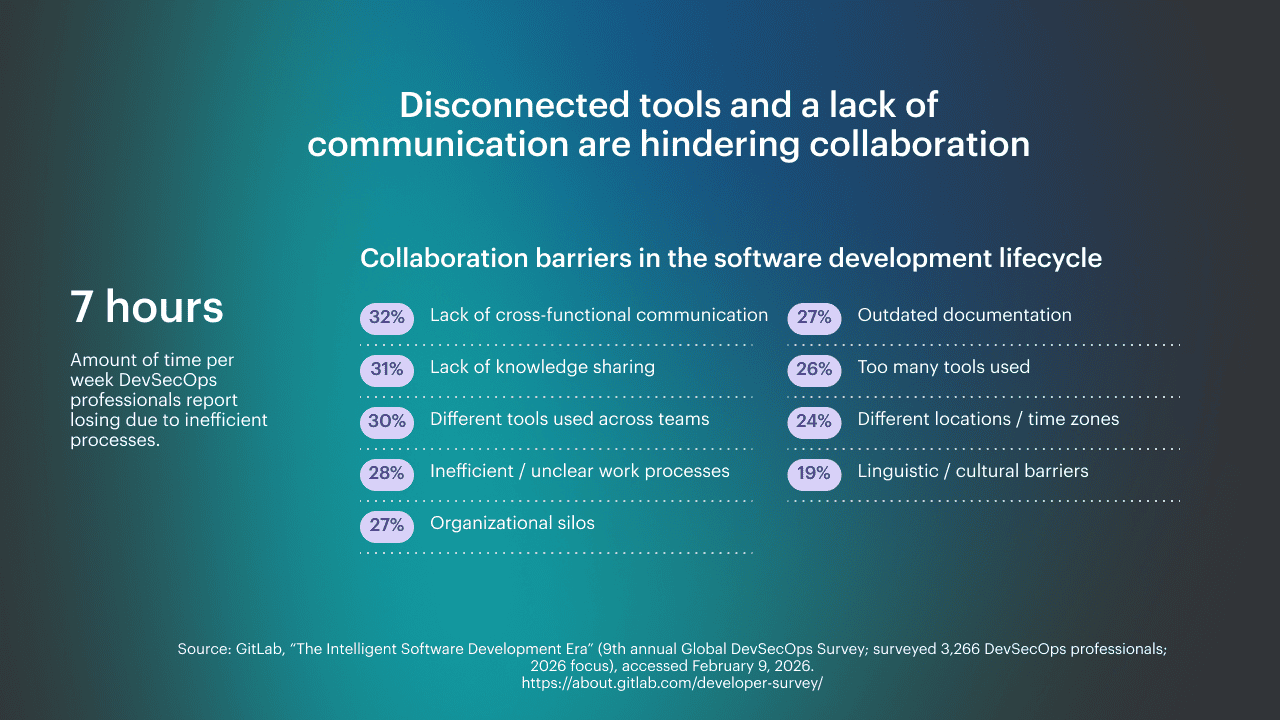

- Practitioners report losing ~7 hours/week to inefficient processes, which is a measurable platform ROI anchor. GitLab DevSecOps report

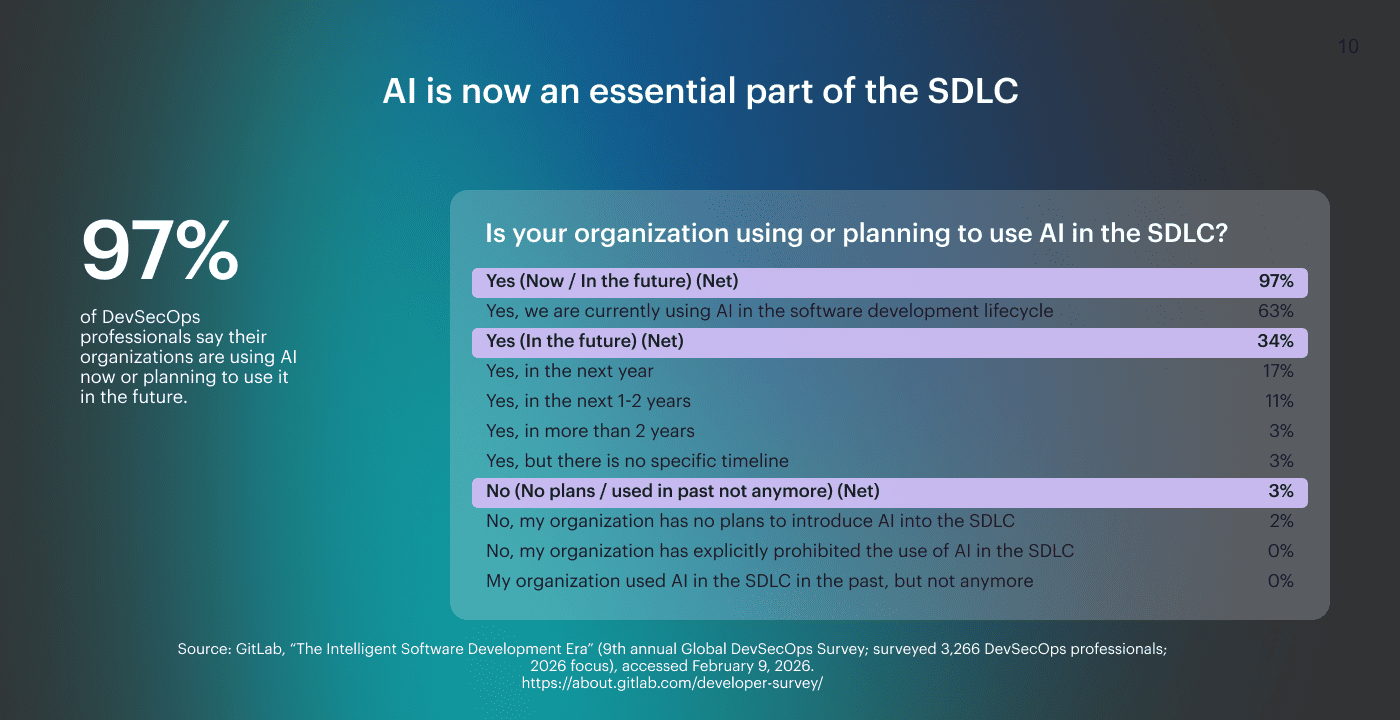

- 97% are using or planning to use AI in the SDLC, so governance must cover AI-assisted change flow. GitLab DevSecOps report

- 85% say agentic AI works best with platform engineering, which ties AI gains to standardized gates, evidence, and ownership. GitLab DevSecOps report

This page treats these statistics as operating constraints for DevSecOps teams shipping across cloud environments: what gets gated, what gets automated, and what gets proven when leadership asks, “How do we know we’re safe?”

How we built the 2026 DevSecOps report

This 2026 report is built from sources that show their methodology, including surveys with named respondents and observed datasets measured in real environments.

A lightweight client pulse adds field notes from US organizations, based on short interviews with engineers about where evidence, ownership, and drift break down in multi-cloud delivery. The same lens is applied to every stat: delivery risk, auditability, and the engineering hours spent on day-to-day cybersecurity work.

Survey signals and observed telemetry are kept separate, so one number never pretends to describe two different layers of reality.

Primary sources include GitLab survey data, Precedence Research, Grand View Research, and StrongDM.

Segment shares: cloud-native applications reached 48%

Cloud-native applications account for 48% of the DevSecOps market by development environment, and secure CI/CD pipeline automation accounts for 28% by use case. Precedence Research

Those two numbers are the “security is moving into delivery” signal. When the biggest segment is cloud-native delivery, and the biggest use case is automated CI/CD, the market statistics are basically saying the same thing teams feel day to day: security can’t stay a separate stage if you want a predictable release flow across environments.

What it means for your org: Treat security controls as pipeline outcomes, not tool outputs. In development, standardize allow, warn, and block decisions and make evidence automatic across each cloud environment, so releases scale without turning audits into archaeology.

Read also: DevSecOps vs CI/CD - How to Build a Secure CI/CD Pipeline

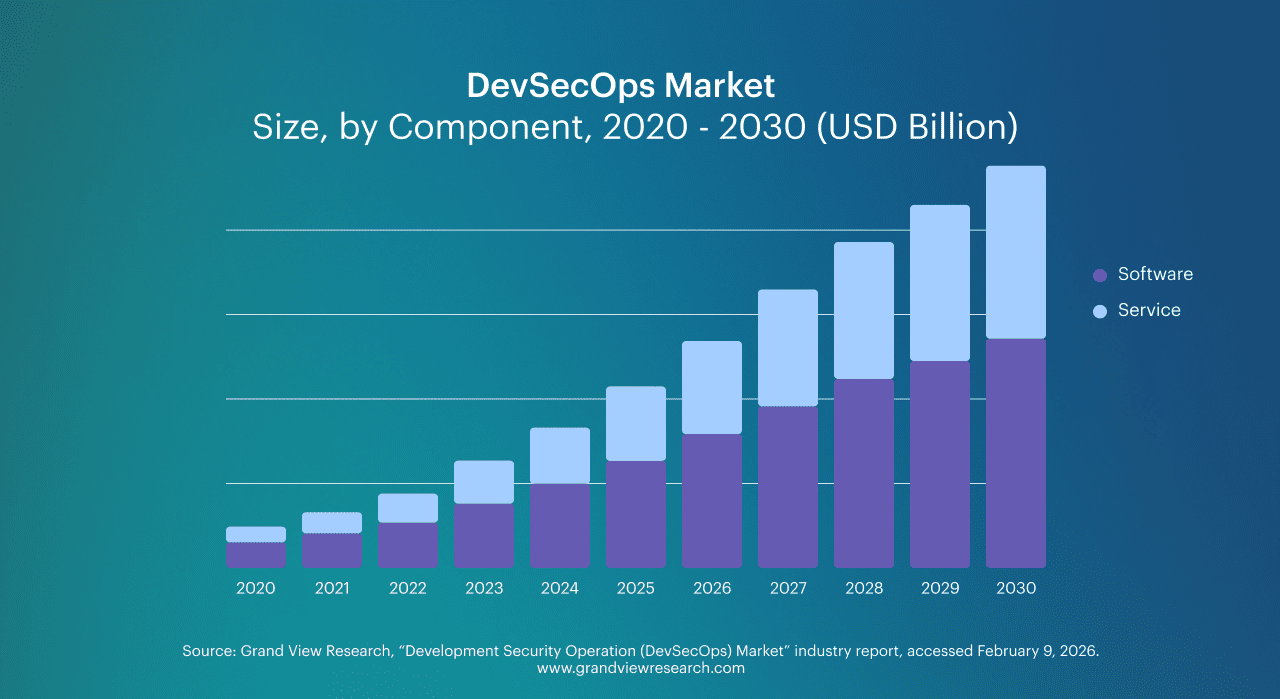

DevSecOps market spend: software takes the 60% share

Software represents 60.1% of the DevSecOps market revenue share. Grand View Research

That split is the “capabilities over consulting” signal. When most spend goes to software, buyers are paying for repeatable system behavior, not one-off implementation help.

In DevSecOps terms, that means policy evaluation, automated controls, and evidence that can survive audits and incident reviews. These statistics also explain why toolchain conversations keep drifting toward platforms. If the outcome has to be consistent across teams and environments, the system has to encode decisions, not rely on people remembering them.

What it means for your org: Treat cybersecurity governance as a product you run, not a project you finish. Invest in mechanisms that turn intent into enforced outcomes: policy-as-code, consistent gates, and evidence capture wired into delivery. If you cannot prove what happened and why, you will keep paying for manual reconciliation in security and release management.

What these DevSecOps market numbers mean for CTO budgeting

The DevSecOps market statistics point to the same budgeting conclusion: you are paying for repeatable outcomes, not extra headcount to reconcile tools. For most organizations, the hidden cost is not licenses. It is the engineers’ time spent on manual approvals, duplicate scans, and rebuilding evidence during audits. Budget accordingly.

Every vendor will claim coverage, but the evaluation questions should be boring and specific:

- Can the platform standardize allow, warn, and block decisions across pipelines?

- Can it link every change to evidence and ownership?

- Can it compare posture across clouds without manual stitching?

If the answer is no, then you are buying more dashboards, not reducing delivery risk.

DevSecOps adoption is uneven by organization size

36% of respondents currently develop software using DevSecOps (up from 27% in 2020), and 60% of rapid development teams embedded DevSecOps practices in 2021 (up from 20% in 2019). StrongDM

DevSecOps is no longer niche, but it is not consistently engineered as a system across portfolios. In practice, DevOps speed is already normalized, while governance often stays team-specific and manual, which is where drift, exceptions, and audit pain accumulate.

What it means for your org: Assume mixed capability across organizations and business units, even when everyone says they “do DevSecOps.” Treat baseline controls as a contract for development teams: define allow, warn, and block outcomes by environment, and make evidence and ownership non-negotiable. That is how you scale without turning delivery into a compliance bottleneck.

Read also: DevSecOps vs DevOps. What’s the Difference?

A minimal multi-cloud baseline leaders can enforce (AWS/Azure/GCP)

When the baseline differs by team, controls become negotiable and audits become manual. The fix is a small contract that applies to every service, every pipeline, and every cloud environment, whether it runs on AWS, Azure, or GCP.

Start with six controls:

- Secrets detection

- SCA

- SBOM generation

- Artifact signing and provenance

- IaC and IAM policy checks

- Release evidence that captures who approved what and why
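One way to keep the six controls a contract rather than a suggestion is to check every pipeline's declared controls against the baseline. The sketch below assumes each pipeline can report the controls it runs as a set of string identifiers. The identifiers and pipeline names are hypothetical and are not taken from any specific CI system.

```python
# Hypothetical identifiers for the six baseline controls.
BASELINE_CONTROLS = frozenset({
    "secrets_detection",
    "sca",
    "sbom_generation",
    "artifact_signing_provenance",
    "iac_iam_policy_checks",
    "release_evidence",
})


def missing_controls(pipeline_controls: set[str]) -> list[str]:
    """Return the baseline controls a pipeline does not declare, sorted for stable output."""
    return sorted(BASELINE_CONTROLS - set(pipeline_controls))


def portfolio_gaps(pipelines: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each pipeline that has gaps to its missing controls; compliant pipelines are omitted."""
    gaps = {}
    for name, controls in pipelines.items():
        missing = missing_controls(controls)
        if missing:
            gaps[name] = missing
    return gaps


if __name__ == "__main__":
    # Hypothetical portfolio: one compliant pipeline and one with gaps.
    portfolio = {
        "payments-api": set(BASELINE_CONTROLS),
        "web-frontend": {"secrets_detection", "sca"},
    }
    print(portfolio_gaps(portfolio))
```

Reporting gaps per pipeline, rather than as a single pass/fail, shows which teams are off-contract and which specific controls they are missing.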

Treat application security as part of the delivery system. Define outcomes per environment (warn in dev, require approval in staging, block in prod), keep evidence automatic and change-linked, and include access paths that bypass code review.
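The per-environment rule (warn in dev, require approval in staging, block in prod) fits in a small lookup table. This is a minimal sketch that assumes three environments named `dev`, `staging`, and `prod`. You would map your own accounts or stages onto those names.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    WARN = "warn"
    REQUIRE_APPROVAL = "require_approval"
    BLOCK = "block"


# Outcome when a control fails: warn in dev, require approval in staging, block in prod.
# The environment names are assumptions for this sketch.
ON_FAILURE = {
    "dev": Outcome.WARN,
    "staging": Outcome.REQUIRE_APPROVAL,
    "prod": Outcome.BLOCK,
}


def gate_outcome(environment: str, control_passed: bool) -> Outcome:
    """Turn a control result into the outcome for a given environment."""
    if control_passed:
        return Outcome.ALLOW
    # Unknown environments fail closed instead of silently allowing the change.
    return ON_FAILURE.get(environment, Outcome.BLOCK)


if __name__ == "__main__":
    for env in ("dev", "staging", "prod", "sandbox"):
        print(env, gate_outcome(env, control_passed=False).value)
```

Failing closed for unrecognized environments is a deliberate choice. A new account that nobody has classified yet should not become the easiest path to production.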

DevSecOps security baseline: over 50% run SAST

Over 50% of teams run SAST, 44% run DAST, and roughly 50% run container and dependency checks as part of their stack. Practical DevSecOps

In the real world, one engineer on Reddit describes getting 847 vulnerabilities dumped from scans “with zero context.”

The baseline exists, but it is not consistent enough to produce comparable outcomes across teams. When one pipeline blocks on a DAST finding and another does not even run DAST, the same class of risk gets treated differently, and posture becomes impossible to defend at the portfolio level.

What it means for your org: Treat scanners as inputs into a small set of gate decisions that every team follows. Standardize what “block” means for production, require an owner for every high-impact finding, and tie evidence to the change so you can explain why risky code shipped.

For engineers, the win is fewer surprise queues and a smaller, actionable set of vulnerabilities that actually affect running applications, not a growing backlog that nobody trusts.
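Shrinking the backlog to findings that matter can be sketched as two filters: keep high-impact findings in components that are actually running, then require an owner for each one. This assumes severities have already been normalized and that you have an inventory of deployed components, for example from SBOMs of running artifacts. The package names and team names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

HIGH_IMPACT = {"high", "critical"}


@dataclass(frozen=True)
class Finding:
    id: str
    severity: str                # assumed already normalized to low/medium/high/critical
    component: str               # package or image the finding applies to
    owner: Optional[str] = None  # team accountable for a fix or an exception


def actionable(findings: list[Finding], running_components: set[str]) -> list[Finding]:
    """Keep only high-impact findings in components that are actually deployed."""
    return [
        f for f in findings
        if f.severity in HIGH_IMPACT and f.component in running_components
    ]


def unowned(findings: list[Finding]) -> list[str]:
    """IDs of findings that still need a named owner before the gate can pass."""
    return [f.id for f in findings if f.owner is None]


if __name__ == "__main__":
    findings = [
        Finding("F1", "critical", "openssl", "team-platform"),
        Finding("F2", "high", "lodash"),          # not deployed anywhere
        Finding("F3", "low", "openssl", "team-platform"),
        Finding("F4", "high", "requests"),        # deployed, no owner yet
    ]
    act = actionable(findings, running_components={"openssl", "requests"})
    print([f.id for f in act], unowned(act))
```

The point is not the filter logic itself but where it runs: inside the gate, so every team gets the same reduced set instead of its own raw scanner dump.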

Read also: SecDevOps vs DevSecOps. Differences, Security Models, and When to Choose

Security posture in 2026: outcomes, evidence, and ownership

A usable security posture is not a dashboard of findings, but a set of enforced decisions you can explain later. For most organizations, posture comes down to three things:

- Outcomes: consistent allow, warn, and block rules tied to environments

- Evidence: the who, what, when, and why captured automatically at the gate, not reconstructed from chat logs

- Ownership: every control result has a named team that can fix it or justify an exception

This is also where prioritization gets real. If you can’t tie posture to access paths and release records, you end up with blind spots that look fine in tooling but fail during audits and incidents.

Process friction costs about 7 hours per week

DevSecOps professionals lose about 7 hours per week to inefficient processes. GitLab DevSecOps report

This is not “admin time.” It is coordination time created by fragmented workflows, unclear handoffs, and decisions that happen outside the delivery system. In multi-team environments, that time shows up as queuing, rework, and waiting for approvals that are not encoded as gates.

The number matters because it scales linearly. Multiply it by headcount, and you get a budget line, not a complaint.
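As a worked example of turning the per-person figure into a budget line, multiply hours lost by headcount, working weeks, and a loaded hourly cost. The 7 hours per week comes from the GitLab figure above. The 46 working weeks and $100 per engineer-hour are placeholder assumptions, not numbers from the report, so substitute your own.

```python
def annual_friction(headcount: int,
                    hours_per_week: float = 7.0,   # GitLab figure cited above
                    working_weeks: int = 46,       # assumption: weeks actually worked per year
                    hourly_cost: float = 100.0     # assumption: fully loaded cost per engineer-hour
                    ) -> tuple[float, float]:
    """Return (hours lost per year, cost of those hours) for a team of `headcount`."""
    hours = headcount * hours_per_week * working_weeks
    return hours, hours * hourly_cost


if __name__ == "__main__":
    hours, cost = annual_friction(50)
    print(f"{hours:,.0f} hours/year, about ${cost:,.0f}")
    # prints "16,100 hours/year, about $1,610,000"
```

Under these assumptions, a 50-person engineering org loses roughly eight engineer-years of time annually, which is the scale at which platform work starts to pay for itself.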

What it means for your org: Treat this as platform ROI. Reduce the number of places where release decisions are made, and move them into repeatable controls that produce evidence by default. Standardize who can approve what, and make access changes traceable to a change record.

In a multi-cloud setup, the goal is fewer decision points and fewer manual reconciliations, so engineers ship without slowing down for security archaeology. For organizations, this is how lost time turns back into delivery speed.
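“Standardize who can approve what” can be as small as an approval matrix checked at the gate. The sketch below assumes approvers are identified by group membership. The group names and environments are hypothetical.

```python
# Hypothetical approval matrix: which groups may approve changes per environment.
APPROVERS: dict[str, set[str]] = {
    "dev": {"service-team"},
    "staging": {"service-team", "release-managers"},
    "prod": {"release-managers"},
}


def approval_is_valid(environment: str, author: str, approver: str,
                      approver_groups: set[str]) -> bool:
    """An approval counts only if the approver is not the author and belongs
    to a group authorized for the target environment."""
    if approver == author:
        return False  # no self-approval
    allowed = APPROVERS.get(environment, set())  # unknown environment: nobody can approve
    return bool(allowed & approver_groups)


if __name__ == "__main__":
    print(approval_is_valid("prod", "alice", "bob", {"release-managers"}))
    print(approval_is_valid("prod", "alice", "carol", {"service-team"}))
```

Keeping the matrix in version control means a change to who can approve production is itself a reviewed, traceable change.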

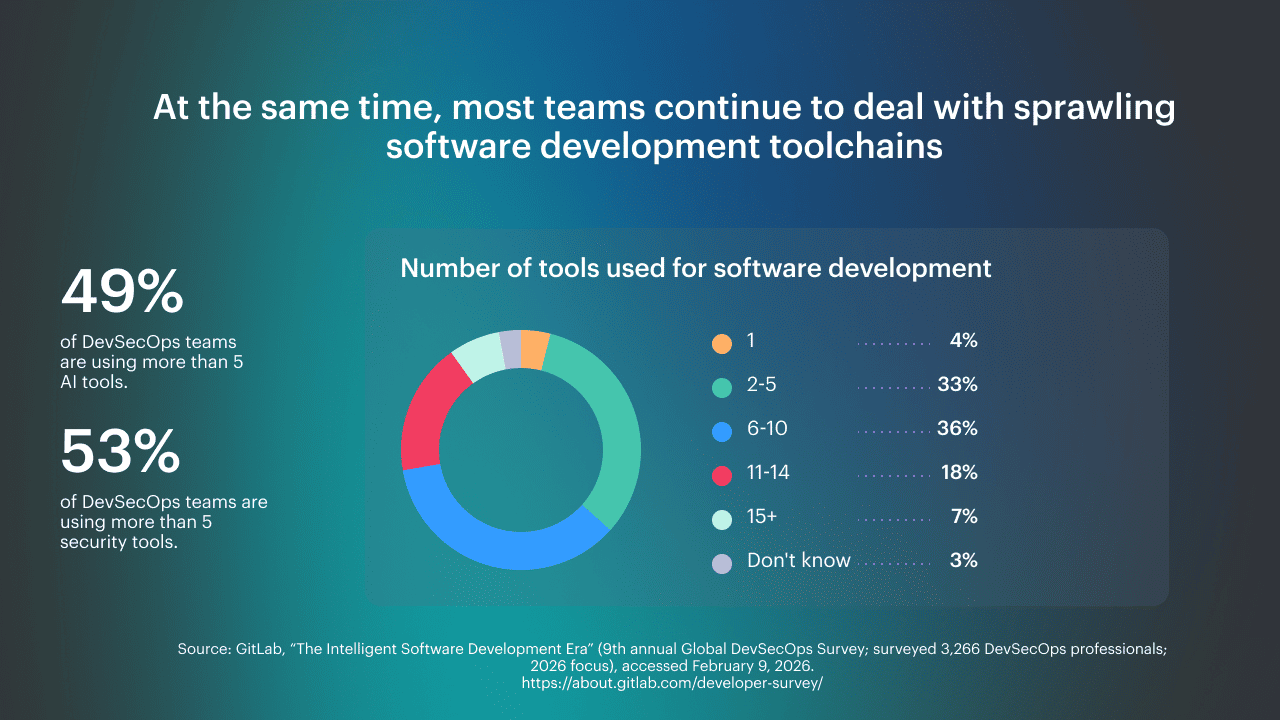

Tool sprawl in DevSecOps: 5+ tools is the new normal

GitLab reports that 60% of professionals use more than 5 tools for software development, and 49% use more than 5 AI tools. GitLab survey press release

The problem is that each tool becomes its own authority for pass, fail, severity, and approval, and evidence ends up scattered. In multi-team delivery, the result is predictable: inconsistent thresholds, duplicated checks with conflicting outputs, and release decisions that drift into chat.

What it means for your org: Define a single allow, warn, and block model that applies to every pipeline and every cloud environment, then make tools feed those decisions instead of redefining them.
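Making tools feed one decision model mostly means two things: mapping each tool's severity labels onto a shared scale, and collapsing duplicate reports before deciding. This is a sketch under assumed inputs. The tool names, label mappings, and finding IDs are hypothetical, and real scanners expose severities in their own formats.

```python
from typing import Iterable

RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}

# Hypothetical per-tool mappings onto one shared severity scale.
TOOL_SEVERITY = {
    "scanner_a": {"CRITICAL": "critical", "HIGH": "high", "MEDIUM": "medium", "LOW": "low"},
    "scanner_b": {"error": "high", "warning": "medium", "note": "low"},
}


def normalize(tool: str, label: str) -> str:
    # Unknown tools or labels map to "high" so they get reviewed instead of silently passing.
    return TOOL_SEVERITY.get(tool, {}).get(label, "high")


def merge(raw: Iterable[tuple[str, str, str, str]]) -> dict[tuple[str, str], str]:
    """raw items are (tool, finding_id, component, label). Duplicate reports of the
    same finding on the same component collapse to the highest normalized severity."""
    merged: dict[tuple[str, str], str] = {}
    for tool, finding_id, component, label in raw:
        sev = normalize(tool, label)
        key = (finding_id, component)
        if key not in merged or RANK[sev] > RANK[merged[key]]:
            merged[key] = sev
    return merged


def decide(merged: dict[tuple[str, str], str], block_at: str = "high") -> str:
    """One allow/warn/block decision for the change, whichever tools reported."""
    worst = max((RANK[s] for s in merged.values()), default=0)
    if worst >= RANK[block_at]:
        return "block"
    return "warn" if worst > 0 else "allow"  # any remaining finding warns


if __name__ == "__main__":
    raw = [
        ("scanner_a", "CVE-X", "libfoo", "MEDIUM"),
        ("scanner_b", "CVE-X", "libfoo", "error"),   # same issue, conflicting label
        ("scanner_b", "RULE-7", "app", "note"),
    ]
    merged = merge(raw)
    print(merged, decide(merged))
```

The threshold (`block_at`) lives in one place, so changing what “block” means is a single reviewed edit rather than a per-tool setting.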

Require ownership and a change-linked audit trail for every high-impact exception, and make access changes part of the same evidence model. If security decisions are not consistent and reconstructable, shipping code at scale will always create governance debt for large organizations.

The DevSecOps operating model

A working toolchain is a decision system, not a pile of scanners. A control detects a condition in code or config, a gate turns it into an outcome, enforcement applies it consistently, and evidence captures who approved what and why. This removes handoffs because decisions live in the pipeline, not in chat. It also keeps audits from turning into archaeology, because the trace is generated at the moment of decision.

In development, this maps cleanly to PR gates, deploy gates, and runtime drift checks that also cover access changes. The goal is simple: make security outcomes predictable and explainable. For the full model and examples, see our DevSecOps toolchain guide.
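The control, gate, enforcement, and evidence chain can be sketched as one function that produces a record at decision time and another that turns the outcome into a CI exit code. This is an illustration, not a reference implementation. Printing JSON stands in for writing to whatever system of record you use, `CHG-1042` is a made-up change ID, and the simple prod-blocks rule is an assumption.

```python
import json
import sys
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Evidence:
    change_id: str           # links the decision to a change record
    control: str             # what detected the condition
    passed: bool
    outcome: str             # allow / warn / block
    environment: str
    approver: Optional[str]  # who, if a human signed off
    reason: str              # why
    decided_at: str          # when (UTC, ISO 8601)


def gate(change_id: str, control: str, passed: bool, environment: str,
         approver: Optional[str] = None, reason: str = "") -> Evidence:
    """Gate: turn a control result into an outcome and record it at decision time."""
    if passed:
        outcome = "allow"
    else:
        outcome = "block" if environment == "prod" else "warn"
    return Evidence(change_id, control, passed, outcome, environment, approver, reason,
                    datetime.now(timezone.utc).isoformat())


def enforce(evidence: Evidence) -> int:
    """Enforcement: emit the evidence record, then return a CI exit code (non-zero blocks)."""
    print(json.dumps(asdict(evidence)))
    return 1 if evidence.outcome == "block" else 0


if __name__ == "__main__":
    record = gate("CHG-1042", "sbom_generation", passed=False, environment="prod",
                  reason="SBOM missing for release artifact")
    sys.exit(enforce(record))
```

Because the record is written before the exit code is returned, a blocked release still leaves a trace explaining why it was blocked.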

AI in the SDLC is the default path for most organizations

63% say they are currently using AI, and 34% say they plan to use it in the future.

Within the “future” group, 17% plan to adopt in the next year, 11% in the next 1 to 2 years, and 3% in more than 2 years. GitLab DevSecOps report

This shows that AI is now a delivery input. Nearly two-thirds already have it in the workflow, and about a third plan to onboard it, most on a defined timeline, which means change volume is rising predictably.

What it means for your org: Treat AI-assisted code as normal change flow. In development, encode guardrails as gates and collect evidence automatically, or security becomes manual friction that teams bypass. The outcome you want is consistent governance that holds up in cybersecurity reviews when velocity increases.

Read also: What Breaks in Delivery When DevSecOps vs SDLC is Misunderstood

AI governance without blocking delivery

Treat AI like any other change source. The goal is an approved path that is easier than going off-road, backed by security awareness training and clear data boundaries.

Log what matters: prompts and context classification, model and tool used, and the reviewer who approved the change. Put gates where they actually shape outcomes. PR gates cover review and provenance for AI-assisted code, build gates capture SBOM and signing, and deploy gates enforce environment policy across each cloud account, including access constraints.
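A PR gate for AI-assisted code can check that the logged fields exist before merge: the approved tool, the model, the context classification, and an independent reviewer. The tool names and allowed classifications below are hypothetical policy values. How a change gets flagged as AI-assisted (a PR label, commit trailer, or IDE telemetry) is left open.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values; replace with your approved tools and data classes.
APPROVED_TOOLS = {"approved-assistant"}
ALLOWED_CONTEXT = {"public", "internal"}


@dataclass
class AIChangeRecord:
    change_id: str
    author: str
    ai_assisted: bool
    tool: Optional[str] = None
    model: Optional[str] = None
    context_classification: Optional[str] = None
    reviewer: Optional[str] = None


def pr_gate_problems(rec: AIChangeRecord) -> list[str]:
    """Return reasons an AI-assisted change should not merge yet (empty list = pass)."""
    if not rec.ai_assisted:
        return []
    problems = []
    if rec.tool not in APPROVED_TOOLS:
        problems.append("tool not on approved list")
    if not rec.model:
        problems.append("model not recorded")
    if rec.context_classification not in ALLOWED_CONTEXT:
        problems.append("context classification missing or not allowed")
    if not rec.reviewer or rec.reviewer == rec.author:
        problems.append("independent reviewer missing")
    return problems
```

Returning a list of reasons rather than a boolean gives the author something to fix, which keeps the approved path easier than going around it.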

Exceptions should behave like controlled changes. Require an owner, an expiry date, and a review cadence, so “temporary” does not become permanent.
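Treating exceptions as controlled changes means the gate can evaluate them like anything else. A minimal sketch, assuming each exception records an owner, an expiry date, and when it was last reviewed. The 30-day review cadence is an illustrative default, not a recommendation from the source data.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class PolicyException:
    control: str
    owner: Optional[str]
    expires_on: Optional[date]
    last_reviewed: date
    review_every_days: int = 30  # assumption: monthly review cadence


def exception_status(exc: PolicyException, today: date) -> str:
    """Classify an exception so expired or unreviewed ones stop suppressing a gate."""
    if not exc.owner or exc.expires_on is None:
        return "invalid"          # no owner or no expiry: never a valid exception
    if today > exc.expires_on:
        return "expired"
    if today - exc.last_reviewed > timedelta(days=exc.review_every_days):
        return "review_overdue"
    return "active"


if __name__ == "__main__":
    exc = PolicyException("sca", "team-a", date(2026, 6, 1), date(2026, 1, 1))
    print(exception_status(exc, today=date(2026, 3, 1)))
```

Only an `active` exception should be allowed to override a gate. Every other status sends the finding back to its normal outcome.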

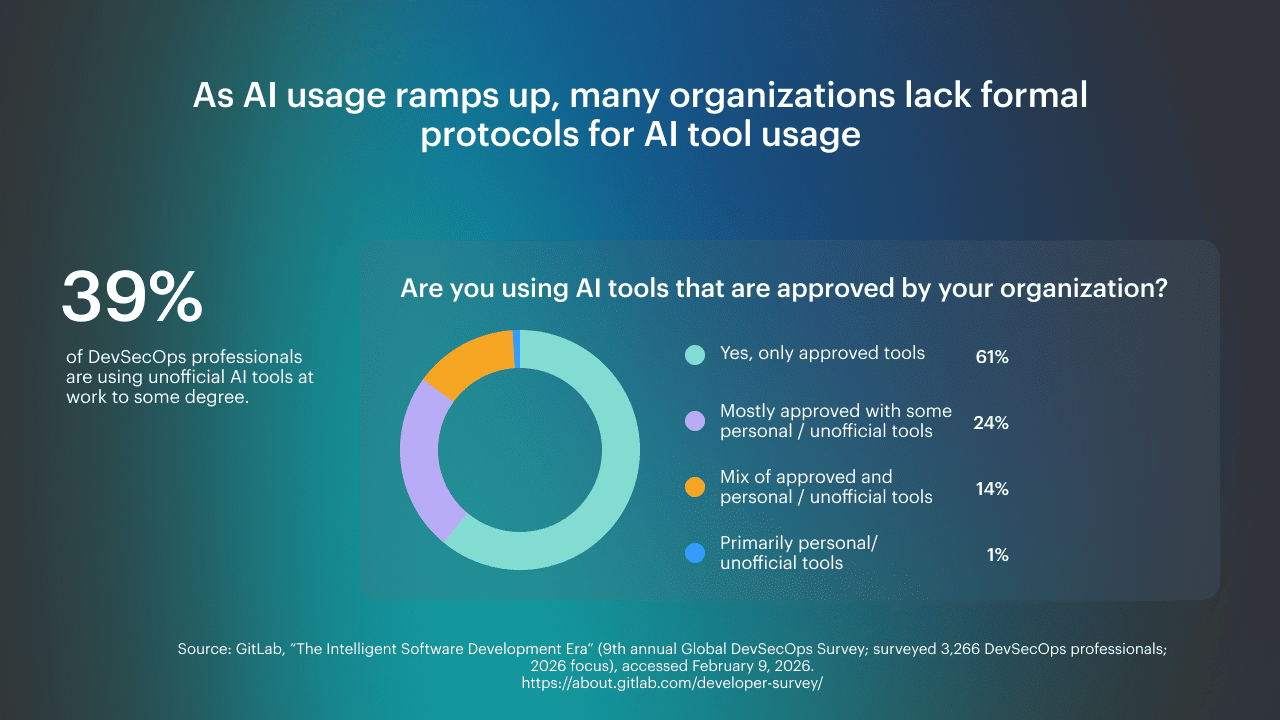

Shadow AI adoption exposes the provenance gap

39% of DevSecOps professionals use unofficial AI tools at work to some degree.

In the same question, 24% say they are mostly approved with some personal or unofficial tools, 14% report a mix of approved and personal or unofficial tools, and 1% say they primarily use personal or unofficial tools. GitLab DevSecOps report

Even teams with an approved stack still have leakage into personal tools, which means parts of the decision trail live outside the system of record. The risk is not just data loss. It is that you cannot reconstruct why something changed when you need to explain it.

What it means for your org: Provide an approved path that captures prompts and context classification, ties AI-assisted code to review, and stores evidence with the change.

Make AI usage part of your release record, then align access boundaries so data and environments are not exposed through side channels. That is how organizations keep security defensible when AI becomes routine.

Multi-cloud DevSecOps trends for 2026: what converges across reports

Across sources, the DevSecOps trends are less about new scanners and more about system design.

- First, AI pushes change volume up, so teams move from “review everything” to “gate what matters” with consistent outcomes.

- Second, multi-cloud delivery forces evidence and ownership to be portable. If posture depends on one account’s tooling, it collapses the moment a workload shifts to another provider.

- Third, identity becomes the control plane. The fastest way to bypass good pipeline work is still through access paths that are not tied to change records.

- Finally, cybersecurity is being measured by explainability, not coverage.

A mature setup can answer, even months later, what was approved, what was deployed, and why production still matches intent.
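“Why production still matches intent” usually comes down to comparing live configuration with an approved baseline. This sketch assumes both are available as flat dictionaries of settings and that the baseline changes only through approved changes. The setting names are hypothetical.

```python
from typing import Any

MISSING = "<missing>"  # placeholder shown when a setting exists on only one side


def detect_drift(approved: dict[str, Any], actual: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {setting: (approved_value, actual_value)} for every setting that differs,
    including settings present on only one side."""
    drift = {}
    for key in sorted(approved.keys() | actual.keys()):
        want = approved.get(key, MISSING)
        have = actual.get(key, MISSING)
        if want != have:
            drift[key] = (want, have)
    return drift


if __name__ == "__main__":
    approved = {"encryption": "aws:kms", "public_access": False, "versioning": True}
    actual = {"encryption": "aws:kms", "public_access": True, "versioning": True, "logging": False}
    print(detect_drift(approved, actual))
```

An empty result is the evidence that production matches intent. Anything else is either an unapproved change or a baseline that needs an approved update.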

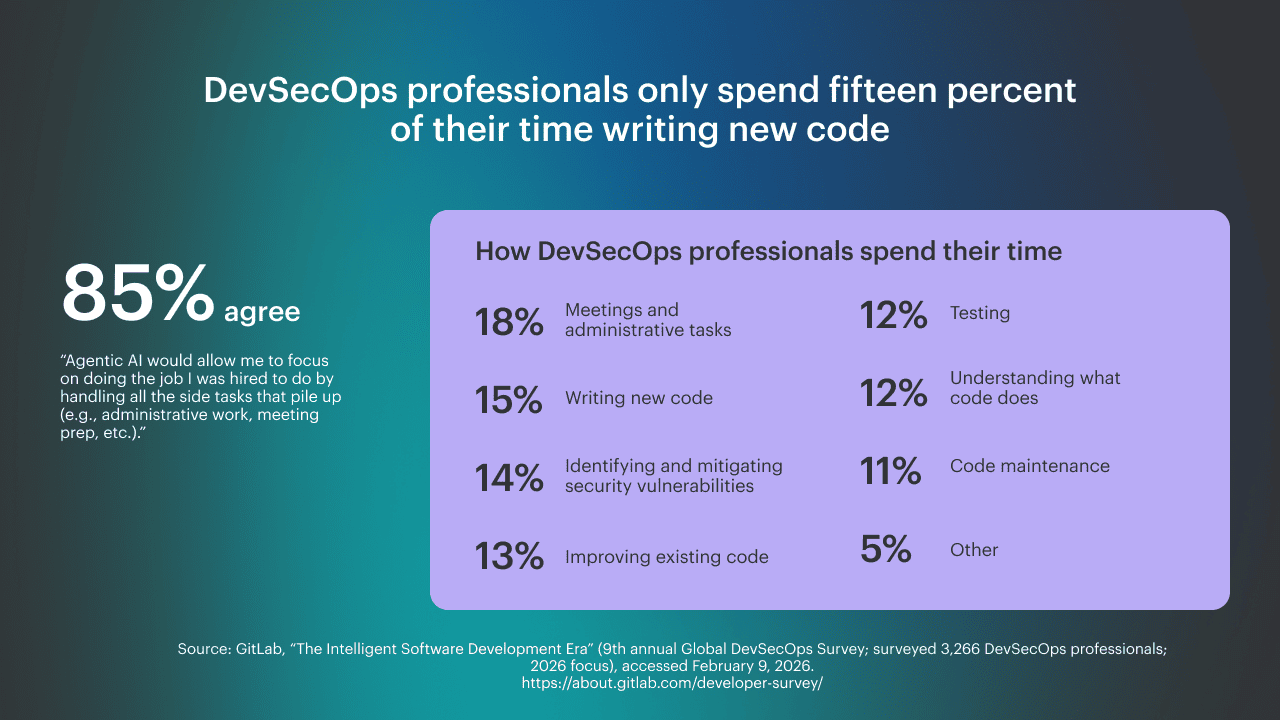

Agentic AI works when platform engineering is in place

85% agree that agentic AI would let them focus on the work they were hired to do by handling side tasks.

In the same survey, DevSecOps professionals report they spend 15% of their time writing new code, 18% on meetings and admin, 14% identifying and mitigating security vulnerabilities, and 13% improving existing code. GitLab DevSecOps report

The “platform engineering” conclusion is baked into the time split. Most lost time is not deep engineering. It is coordination, context switching, and chasing artifacts across systems. Agentic AI can reduce that, but only if the delivery system is standardized. Otherwise, it just creates more actions that nobody can trace or govern.

What it means for your org: For organizations shipping in multi-cloud, agentic AI only helps when pipelines, policies, and evidence are consistent.

Make approvals and exceptions part of the system, not side conversations. Give engineers a paved path where security rules are encoded as gates, and where every automated action leaves a trace tied to the change. That is how DevSecOps scales autonomy without scaling drift.

DevSecOps trends for 2026: what shifts next?

- Global market growth: The global DevSecOps market is estimated at USD 8,841.8M (2024) and projected to reach USD 20,243.9M by 2030 (CAGR 13.2% from 2025-2030). Precedence Research

- North America concentration: North America dominated the global DevSecOps market with a 35.2% revenue share in 2024. Precedence Research

- Software-led spend: Software accounted for USD 6,552.4M revenue in 2024, and software held a 60.1% revenue share in 2024, reinforcing that the category is buying enforcement and automation as product capability. Grand View Research

- Where growth is heading: Supply chain security is called out as the fastest-growing segment in 2026-2035, which matches what teams feel as dependency and provenance risk becomes operational, not theoretical. Grand View Research

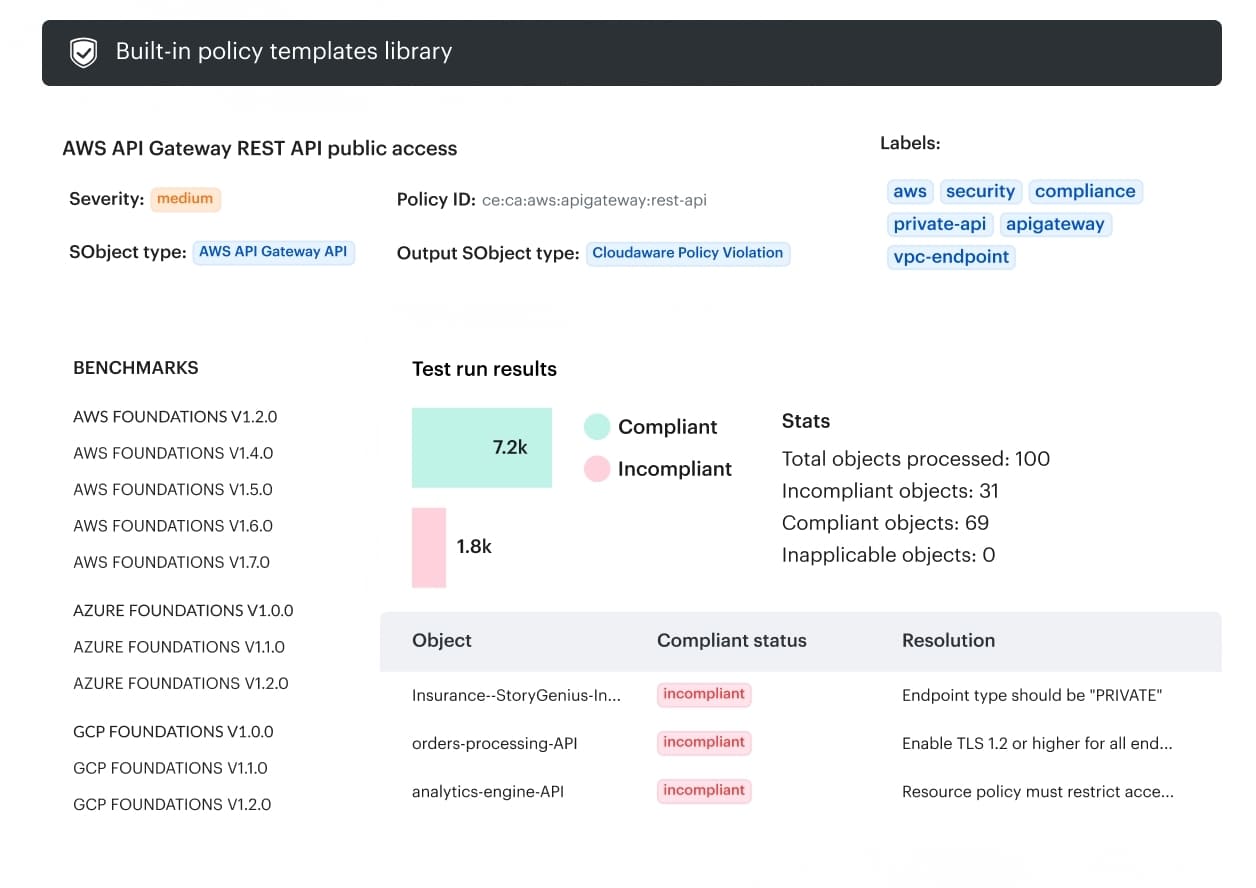

How Cloudaware unifies evidence and ownership across multi-cloud DevSecOps

Cloudaware helps keep DevSecOps decisions traceable across pipelines and cloud accounts. It centralizes the minimum audit trail teams need to answer “what changed, who approved, what policy evaluated it, and what is the current state,” without stitching evidence by hand. The core capabilities include:

- Context-based approvals routing across multi-cloud: approvals can be routed by account, user group, or environment across AWS, Azure, and GCP, including hybrid environments such as Oracle and VMware.

- Time-based approvals and workflow notifications: supports time-based approvals (change windows) and workflow notifications in Slack, Jira, ServiceNow, and PagerDuty.

- Approval status in CMDB plus audit logging: approval statuses appear in the CMDB, and every approval, rejection, and change is logged, including who signed off and when.

- Audit logs and custom reporting for infrastructure and IAM changes: provides multi-cloud and hybrid audit logs and custom reports for changes, including firewall changes and IAM tweaks.

- Baselines and configuration drift tracking with change history: supports baselines and tracking what changed, when, and why, including approval-based baselines and asset attribute change tracking.

- Go/no-go policies for release governance: supports go/no-go policies for infrastructure and code releases using violations data, including blocking promotion of non-compliant changes.